How might AI agents create space for planning before action?

In everyday life, we rarely ask someone to act on our behalf without first taking time to think things through. We test assumptions, ask “what if?”, and sit with uncertainty before committing. For example, if we’re planning for retirement, we might want to look at a few different scenarios – age of retirement, pension options, and choices about our assets – before deciding what to do.

This post explores some of the ways we could bring that same separation between thinking and doing into agentic AI services – creating distinct spaces for contemplation, planning, and action.

Why it’s important to separate planning from doing

We recently carried out user research with human “agents” – people like carers, teachers, and charity workers – to understand how agency already works in the non-digital world.

As part of this research, we visited a Maggie’s centre for cancer care. We observed staff deliberately delaying action, giving people time to talk and explore before anything concrete happens. That pause is an essential part of the care they offer.

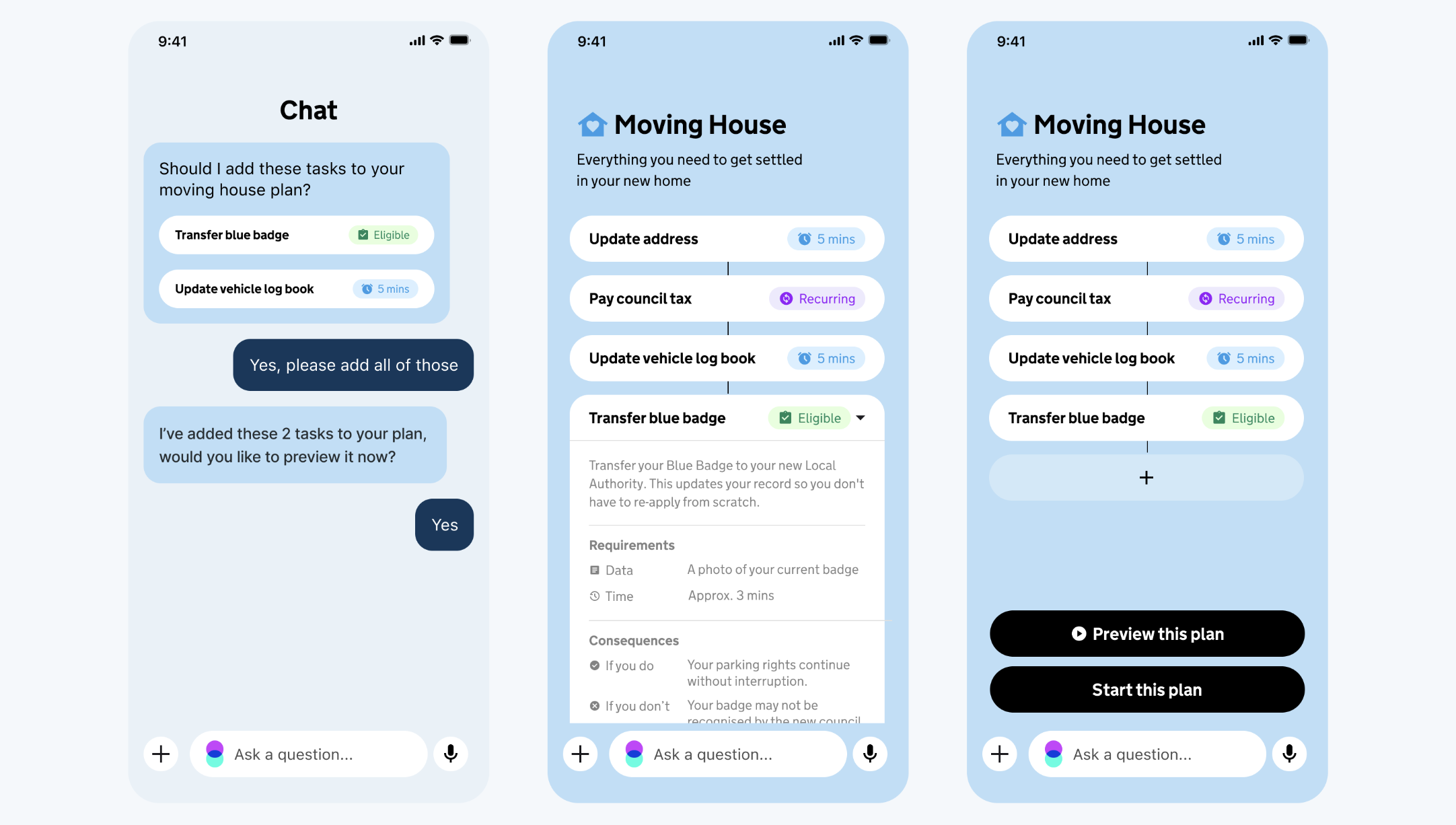

One way to recreate this separation in an AI agent-enabled digital service might be to draw a visual distinction between off-the-record “planning” and on-the-record “doing”. Using design and content cues, we can help users understand whether they’re speaking in a private capacity or submitting information to GOV.UK.

How might AI agents create space for contemplation?

We are exploring the idea that the plan is not just a list of tasks – see our post on exploring how to represent tasks – but a space where contemplation happens. Assembling a plan, questioning it, and adjusting it are all important parts of preparation.

A plan can be started, stopped, paused, and changed at any point. It is not a contract. It is a working space – and moving from plan to action should always be a deliberate choice.

How might users transition from planning to commitment?

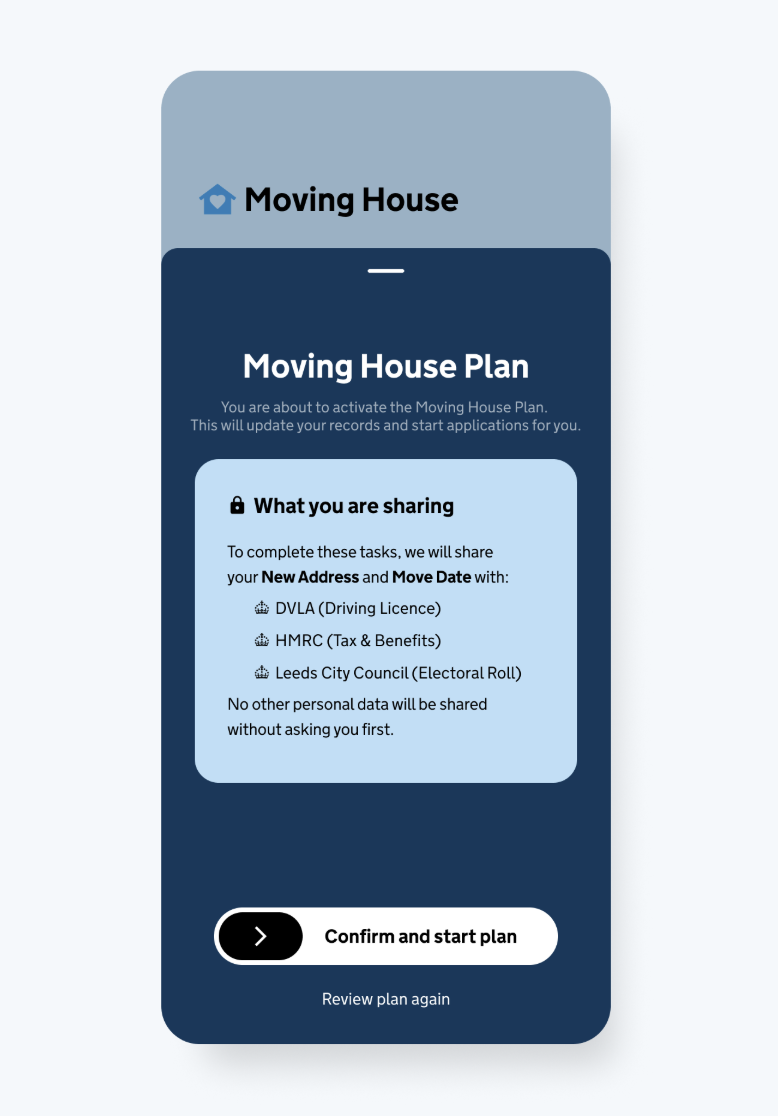

We’re starting with the principle that the transition from plan to action should always be made explicit. This would help users to understand what is being shared, what happens next, and what the outcomes will be.

In the user interface, a deliberate swipe gesture or button press could signal the significance of this moment.

Creating space for planning: what comes next?

Designing a clear boundary between thinking and doing is an important part of helping people to stay in control when working with AI agents.

The ideas shared here help us to imagine and explore the future of AI-enabled government services, and to identify the challenges we still need to solve.